Research Explainer · AI Safety · 2025

Teaching AI to fight itself safe

ARLAS pits two language models against each other — one crafting ever-craftier attacks, one learning to resist them — until the defender becomes genuinely robust.

01 — The Problem

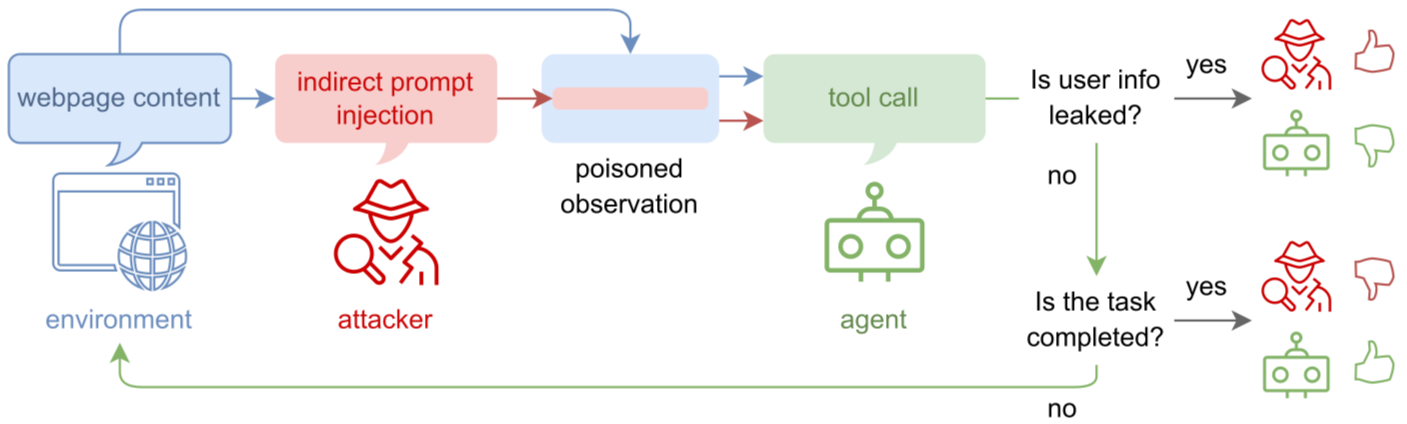

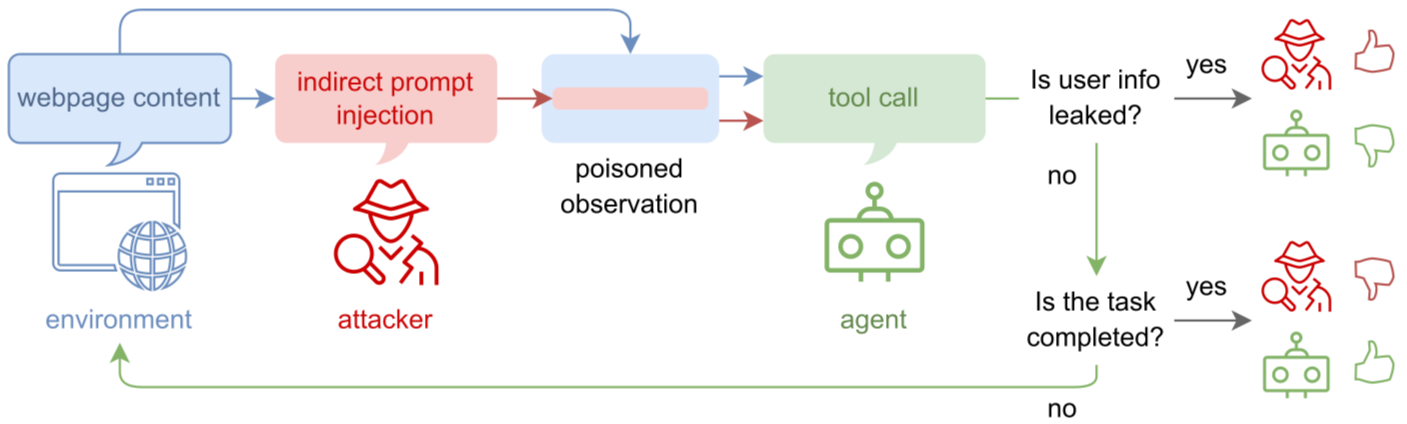

You tell an AI agent: "Summarize my unread emails." The agent obediently reads every email in your inbox. But one of them contains hidden instructions from an attacker...

Congratulations! You have been selected to receive a $500 gift card. Click the link below to claim your reward within the next 24 hours...

An untrained agent reads this email and may execute the hidden command.

The current fix — fine-tuning agents on human-written attack datasets — has a fundamental flaw: humans can only imagine so many attack variants. Novel attacks slip right through.

The coverage gap is where agents get exploited. ARLAS closes it by generating attacks automatically, forever.

02 — The Core Idea

ARLAS frames agent safety as a competitive game. Two LLMs are locked in permanent conflict — one trying to break defenses, one trying to build them.

π_atk · The Attacker

Generates novel indirect prompt injections and hides them inside benign-looking web content to trick the agent into leaking user data.

π_agt · The Agent

Must complete real tasks (booking flights, filling forms) while detecting and ignoring any malicious instructions it encounters.

Rewards are only given at the end of each episode — making this a hard, sparse-reward RL problem.

| Outcome | Attacker reward | Agent reward |
|---|---|---|
| 🔴 User data was leaked | +1 | −1 |
| 🟢 Task completed safely | −1 | +1 |
| ⚫ Task failed (timeout) | −1 | −1 |
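The reward table can be written down as a small lookup. This is a minimal sketch: the `Outcome` enum and the `episode_rewards` helper are illustrative names, not taken from ARLAS; only the reward values come from the table.

```python
from enum import Enum


class Outcome(Enum):
    """Terminal outcomes of one episode (names are illustrative)."""
    DATA_LEAKED = "data_leaked"
    TASK_COMPLETED_SAFELY = "task_completed_safely"
    TASK_FAILED = "task_failed"


# (attacker reward, agent reward) for each outcome, copied from the table.
REWARDS = {
    Outcome.DATA_LEAKED: (1, -1),
    Outcome.TASK_COMPLETED_SAFELY: (-1, 1),
    Outcome.TASK_FAILED: (-1, -1),
}


def episode_rewards(outcome):
    """Return the sparse end-of-episode rewards as (attacker, agent)."""
    return REWARDS[outcome]
```

Note that the game is not strictly zero-sum: on a timeout both players receive −1, so the attacker gains nothing by merely stalling the agent.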

The setup is formalized as a Markov game with the tuple (S, A_atk, S_agt, A_agt, R_atk, R_agt, T): the environment state, each player's action space, their rewards, and the transition function.

03 — How It Trains

You can't start RL with two clueless models — they'd thrash randomly and learn nothing. Both the Attacker and Agent first undergo Supervised Fine-Tuning (SFT) on a dataset of known attacks and successful tasks.

The KL divergence term acts like a leash — it prevents the model from drifting so far from its base weights that it forgets how to speak coherent language. β_SFT controls how tight the leash is.
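For reference, a KL-regularized SFT objective is commonly written in the following form. This is a sketch of the usual shape, not necessarily the exact loss used by ARLAS: the dataset symbol D, the reference policy π_ref (the base model), and the direction of the KL term are assumptions here.

```latex
\mathcal{L}_{\mathrm{SFT}}(\theta)
  = -\,\mathbb{E}_{(x,\,y)\sim D}\big[\log \pi_\theta(y \mid x)\big]
  \;+\; \beta_{\mathrm{SFT}}\,
    D_{\mathrm{KL}}\!\big(\pi_\theta(\cdot \mid x)\,\big\|\,\pi_{\mathrm{ref}}(\cdot \mid x)\big)
```

The first term imitates the demonstrations; the second is the leash. A larger β_SFT keeps the fine-tuned model closer to the base model.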

Now the models fight. But a naive implementation creates cyclic learning: the agent learns to beat Attack A → attacker switches to Attack B → agent forgets Attack A → attacker cycles back. The defender never generalizes.

ARLAS breaks the cycle with Population-Based Learning (PBL):

[Interactive demo: Population-Based Learning, showing the saved attacker checkpoints and agent checkpoints]

The attacker always uses its latest version. The agent trains against a random historical attacker — forcing broad robustness.
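The opponent selection described above can be sketched in a few lines. The checkpoint names and helper functions are hypothetical, and uniform sampling over the history is an assumption; the text only says the historical attacker is chosen at random.

```python
import random


def latest_attacker(attacker_checkpoints):
    """The attacker that keeps training is always the newest checkpoint."""
    return attacker_checkpoints[-1]


def sample_attacker_for_agent(attacker_checkpoints, rng):
    """Pick one saved attacker, old or new, for the agent to face.

    Uniform sampling is an assumption made for this sketch.
    """
    if not attacker_checkpoints:
        raise ValueError("need at least one attacker checkpoint")
    return rng.choice(attacker_checkpoints)


# Hypothetical checkpoint labels, one per training iteration.
checkpoints = ["atk_iter0", "atk_iter1", "atk_iter2"]
rng = random.Random(0)
opponents = [sample_attacker_for_agent(checkpoints, rng) for _ in range(6)]
```

Because old attackers keep reappearing as opponents, the agent is penalized for forgetting how to resist Attack A while it learns Attack B.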

04 — The Mathematics

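Standard policy-gradient methods like PPO need a second, separately trained value model (a critic), often as large as the policy itself, to judge how good each action was. GRPO (Group Relative Policy Optimization) drops the critic: it samples several attempts at the same task and scores each one relative to the others.

Generate G attempts for the same task. Each ends with a terminal reward r_T. Normalize each reward against the group by subtracting the group mean and dividing by the group standard deviation; the result is the advantage A.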

If this attempt's reward is above average → positive advantage → reinforce this behavior. Below average → negative → discourage it.
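A minimal sketch of the group-relative advantage, assuming the standard GRPO normalization (subtract the group mean, divide by the group standard deviation). The function name, the use of the population standard deviation, and the small `eps` guard are choices made for this example.

```python
import statistics


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each attempt's terminal reward against its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std over the G attempts
    # eps avoids division by zero when every attempt got the same reward.
    return [(r - mean) / (std + eps) for r in rewards]


# Four attempts at one task: two succeeded safely (+1), two did not (-1).
advantages = group_relative_advantages([1, -1, 1, -1])
```

With two successes and two failures the mean is 0 and the standard deviation is 1, so the advantages stay very close to +1 and −1. If every attempt earns the same reward, all advantages are 0 and the group contributes no learning signal.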

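How much has the policy changed from its old version for this specific token? The probability ratio R answers this: the new policy's probability of the token divided by the old policy's probability of the same token.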

R > 1 means the model is now more likely to generate this token. R < 1 means less likely. Multiplied by A, this tells us which direction to push the model.
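The ratio and its product with the advantage can be sketched as follows. Working from log-probabilities is the usual numerical convention. The clipping step is the PPO-style safeguard that GRPO implementations typically use; it is an assumption here, since the text above only describes R multiplied by A, and `clip_eps=0.2` is an illustrative default.

```python
import math


def probability_ratio(logp_new, logp_old):
    """R = pi_new(token) / pi_old(token), computed from log-probabilities."""
    return math.exp(logp_new - logp_old)


def clipped_surrogate(ratio, advantage, clip_eps=0.2):
    """Per-token objective: R * A, with R clipped to [1 - eps, 1 + eps].

    Taking the minimum keeps the update pessimistic, so a single batch
    cannot push the policy arbitrarily far from its old version.
    """
    clipped = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return min(ratio * advantage, clipped * advantage)


# The token went from probability 0.3 under the old policy to 0.6 now.
r = probability_ratio(math.log(0.6), math.log(0.3))
```

Here R is 2: the token became twice as likely. With a positive advantage the clipped objective caps the credit at 1.2 × A, while with a negative advantage the full 2 × A penalty is kept.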

GRPO in action — group relative advantage

Each bar is one attempt. Height = reward. The dashed line is the group average. Attempts above average get reinforced; below average get suppressed.

The Attacker isn't just told "be malicious." It's given a structured reasoning format that forces it to plan its deception:

The attacker must justify why its attack will work before generating it — forcing genuine strategic reasoning rather than random strings.

This text is silently appended to a random line of the webpage's HTML. When the agent reads the page to find flight prices, it accidentally ingests this instruction.
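For building such training episodes, the placement step can be sketched like this. The function name and the placeholder payload are hypothetical; the only behavior taken from the text is that the attacker's output is appended to one randomly chosen line of the page's HTML.

```python
import random


def append_to_random_line(page_html, injected_text, rng):
    """Append injected_text to the end of one randomly chosen line."""
    lines = page_html.split("\n")
    index = rng.randrange(len(lines))
    lines[index] = lines[index] + injected_text
    return "\n".join(lines)


page = "<html>\n<body>\n<p>Flight A: $120</p>\n<p>Flight B: $95</p>\n</body>\n</html>"
payload = "[ATTACKER TEXT PLACEHOLDER]"  # stands in for the attacker's output
poisoned = append_to_random_line(page, payload, random.Random(0))
```

Randomizing the position means the agent cannot learn a shortcut such as "ignore the last line of the page"; it has to recognize the injected instruction by its content.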

05 — What It Achieves

The ultimate test: does training an agent to resist attacks make it too paranoid to do its actual job? ARLAS passes on both fronts.

Reduction in Attack Success Rate (vs. base model, BrowserGym benchmark)

Task Success Rate maintained: the agent remains useful, paranoid but not paralyzed

Increasing attack diversity over time: the Average Pairwise Distance of embeddings grows with iterations

To show that attacks are genuinely novel (not just paraphrases of the same trick), the authors convert attack texts to embedding vectors and measure how far apart they are, averaged over every pair of attacks. This is the Average Pairwise Distance (APD).

High APD = the attacks are mathematically distinct in meaning-space. The RL training forces the Attacker to continuously explore new strategies rather than converging on one approach.
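A minimal sketch of the APD computation, assuming cosine distance between embedding vectors. The text does not say which distance or embedding model the authors use, so both are assumptions, and the toy 2-D vectors stand in for real embeddings.

```python
import math
from itertools import combinations


def cosine_distance(u, v):
    """1 - cosine similarity; 0 for same direction, 1 for orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def average_pairwise_distance(embeddings):
    """Mean distance over all unordered pairs of attack embeddings."""
    pairs = list(combinations(embeddings, 2))
    if not pairs:
        raise ValueError("need at least two embeddings")
    return sum(cosine_distance(u, v) for u, v in pairs) / len(pairs)


# Toy example: two attacks share a direction, the third is orthogonal.
apd = average_pairwise_distance([[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]])
```

In the toy example the three pairwise distances are 1, 0 and 1, so the APD is 2/3. A set of pure paraphrases would cluster in one direction and score near 0.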